Reducing Quote Normalization Time in Capital Projects

In capital project procurement, quote normalization is the work that happens between receiving vendor submissions and making an award decision. It is often the most time-consuming step in the sourcing cycle—and also the step most likely to introduce errors that create disputes after award. When normalization is slow, project schedules slip. When normalization is incomplete, scope gaps become change orders.

This article defines what quote normalization involves in capital project contexts, identifies why it takes so long, and provides a structured approach to reducing normalization time without reducing evaluation rigor.

Key Concepts

| Term | Definition |
|---|---|
| Quote normalization | The process of transforming vendor submissions from their native formats into a standardized structure that enables direct, line-by-line comparison across bidders |
| Bid leveling | The term more common in engineering and construction for the same process: adjusting bids to a common scope baseline before price comparison |
| Scope deviation | A difference between what the RFQ specified and what a vendor included or excluded in their submission |
| Commercial assumption | A vendor’s stated condition or qualification that modifies the basis on which their price applies (e.g., payment terms, escalation clauses, warranty limitations) |
| RFQ (Request for Quotation) | A formal document issued to vendors requesting pricing and technical details for a defined scope of work or materials supply |
| Bid tabulation | A structured comparison document that presents normalized vendor data side-by-side, enabling evaluation across multiple bidders on consistent criteria |

Why Quote Normalization Takes So Long in Capital Projects

Key Takeaway: Quote normalization is slow because vendors submit in incompatible formats, scopes vary from the RFQ, and commercial assumptions are buried in document text—all of which require manual identification and reconciliation.

Capital project RFQs typically draw submissions from three to eight vendors, each structured according to the vendor’s internal quoting system. A single RFQ package may yield:

- Vendor A: a 45-tab Excel workbook with line items organized by their cost codes

- Vendor B: a multi-page PDF with narrative pricing and embedded assumptions

- Vendor C: an email with summary pricing and a separate technical exceptions list

- Vendor D: a structured response that addresses the RFQ sections out of order with footnoted qualifications

Before a single price comparison can occur, the procurement team must:

- Extract every line item from each submission

- Map each item to the RFQ line item structure

- Identify scope inclusions and exclusions relative to the RFQ baseline

- Identify commercial assumptions and exceptions

- Quantify the cost impact of scope deviations (adding excluded scope at assumed unit rates, or removing included scope the RFQ did not require)

- Restate each bid on the adjusted, normalized scope basis

Each step involves judgment calls. Errors in any step can result in a cost comparison that does not reflect actual differences between vendors—leading to an award decision based on distorted data.
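Once line items are mapped, the last three steps reduce to simple arithmetic. The Python sketch below is illustrative only and rests on stated assumptions: every vendor line already carries an RFQ line reference, excluded scope is priced at a project estimate unit rate, and quantity differences on items that both the RFQ and the vendor priced are deliberately left alone. `LineItem` and `normalize_bid` are hypothetical names, not part of any tool.

```python
from dataclasses import dataclass


@dataclass
class LineItem:
    ref: str            # RFQ line item reference (numbering is hypothetical)
    quantity: float
    unit_price: float

    @property
    def amount(self) -> float:
        return self.quantity * self.unit_price


def normalize_bid(vendor_items, rfq_quantities, estimate_rates):
    """Restate one vendor's bid on the RFQ scope basis.

    vendor_items:   {ref: LineItem} as submitted by the vendor
    rfq_quantities: {ref: quantity} required by the RFQ
    estimate_rates: {ref: unit rate} from the project estimate, used to price exclusions
    Returns (submitted_total, normalized_total, adjustments).
    """
    submitted_total = sum(item.amount for item in vendor_items.values())
    adjustments = []
    # RFQ items missing from the submission are treated as exclusions and
    # added back at the project estimate unit rate.
    for ref, qty in rfq_quantities.items():
        if ref not in vendor_items:
            adjustments.append((ref, "add excluded scope", qty * estimate_rates[ref]))
    # Submitted items the RFQ did not require are removed from the comparison.
    for ref, item in vendor_items.items():
        if ref not in rfq_quantities:
            adjustments.append((ref, "remove extra scope", -item.amount))
    normalized_total = submitted_total + sum(cost for _, _, cost in adjustments)
    return submitted_total, normalized_total, adjustments


vendor = {
    "1.1": LineItem("1.1", 100, 12.0),
    "1.2": LineItem("1.2", 1, 5000.0),
    "9.9": LineItem("9.9", 1, 800.0),   # priced by the vendor but not in the RFQ
}
rfq = {"1.1": 100, "1.2": 1, "1.3": 40}  # the vendor omitted item 1.3
submitted, normalized, adjustments = normalize_bid(vendor, rfq, {"1.3": 25.0})
print(submitted, normalized)  # 7000.0 7200.0
```

Keeping each adjustment as a separate record, rather than folding it silently into the total, is what lets a reviewer check the restated bid line by line.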

Time Allocation in Manual Quote Normalization

| Activity | Typical Time Share | Primary Risk |
|---|---|---|
| Data extraction from vendor documents | 25-35% | Transcription errors, missed line items |
| Scope deviation identification | 20-30% | Incomplete identification of exclusions |
| Commercial assumption review | 15-20% | Missed assumptions that affect price basis |
| Scope adjustment and cost impact calculation | 20-25% | Incorrect unit rate assumptions |
| Bid tabulation preparation | 10-15% | Formatting inconsistencies between reviewers |

Root Cause Analysis: Why Vendor Submissions Are Non-Comparable

Key Takeaway: The primary driver of normalization time is not vendor behavior—it is RFQ design. RFQs that do not specify submission format, data structure, and required fields generate submissions that cannot be compared without extensive manual work.

Most of the variation in vendor submission formats is preventable. Vendors submit in inconsistent formats because the RFQ allows it. When the RFQ specifies that pricing must be submitted on a provided line-item template, in defined units, with scope inclusions and exclusions listed in a designated section, vendors generally comply. Uniform submissions are not an accident; they are the result of an RFQ that requires uniformity.

Common RFQ design failures that create normalization problems:

- No pricing template provided: Vendors structure pricing according to their own cost model, not the project’s work breakdown structure

- No required format for scope exceptions: Vendors include exceptions in narrative form, embedded in technical sections, or not at all—making systematic identification impossible

- Ambiguous scope descriptions: When the RFQ scope is ambiguous, vendors make different assumptions, creating scope variation that cannot be normalized without clarification

- No specified units: Vendors price in different units ($/unit vs. lump sum vs. $/hour) that require conversion before comparison

RFQ Design Quality vs. Normalization Time

| RFQ Design Element | Present | Absent |
|---|---|---|
| Structured pricing template | Vendors fill identical fields; extraction is rapid | Vendors use own format; extraction requires mapping per vendor |
| Required exceptions section | Exceptions are in one place; review is efficient | Exceptions are scattered; identification requires full document review |
| Unambiguous scope description | Minimal scope variation; fewer deviations to normalize | High scope variation; many deviations to identify and price |
| Specified units of measure | Direct comparison is possible | Unit conversion required per vendor |
| Commercial terms template | Assumptions appear in designated fields | Assumptions buried in narrative; manual identification required |

Improving RFQ design is the highest-leverage intervention for reducing normalization time. It is also the lowest-cost: better templates require effort to create once and reduce normalization effort on every subsequent bid.

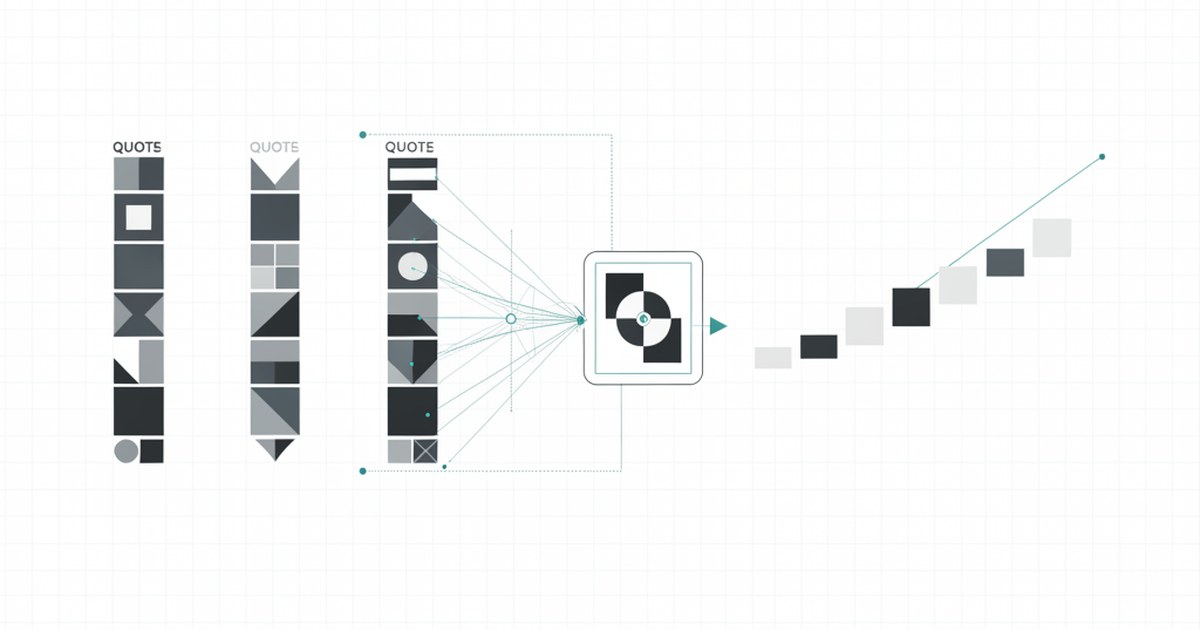

Approach 1: Automated Data Extraction and Normalization

Key Takeaway: Automated extraction eliminates the most time-consuming and error-prone step in manual normalization—transcribing data from vendor documents into a comparison structure.

Modern procurement platforms provide AI-assisted data extraction that reads vendor submissions (PDFs, Excel files, email text) and maps line items to the RFQ structure automatically. The productivity impact can be substantial: a multinational construction firm that implemented automated extraction reported a 50% reduction in quote normalization time, freeing procurement analysts to evaluate deviations rather than transcribe numbers.

Automated normalization tools address:

- Line item extraction: Parse vendor documents to identify and extract pricing line items, quantities, and units without manual transcription

- Scope mapping: Match vendor line items to RFQ line items using semantic matching and configurable mapping rules

- Deviation flagging: Identify items present in the RFQ but absent from the vendor submission (potential exclusions) and items present in the submission but absent from the RFQ (potential additions)

- Assumption detection: Flag narrative text that contains qualifying language (“assumes,” “excludes,” “subject to,” “based on”) for human review
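Three of these tasks (scope mapping, deviation flagging, and assumption detection) can be sketched in a few lines of Python. This is a deliberately simple illustration, not how any particular platform works: it assumes vendor lines have already been extracted as text, and it substitutes character-level string similarity from the standard library's `difflib` for the semantic matching described above, so it will miss synonyms. The keyword list mirrors the qualifying phrases in the text; `map_to_rfq`, `flag_deviations`, and `flag_assumptions` are hypothetical helpers.

```python
import difflib
import re

# Qualifying phrases named in the text; a real list would be longer and configurable.
QUALIFIERS = re.compile(
    r"\b(assumes?|assumed|excludes?|excluded|subject to|based on)\b", re.IGNORECASE
)


def map_to_rfq(vendor_descriptions, rfq_items, cutoff=0.6):
    """Map each vendor line description to the closest RFQ description.

    Uses character-level string similarity (difflib), not semantic matching.
    rfq_items: {ref: description}. Returns {vendor description: ref or None}.
    """
    ref_by_desc = {desc.lower(): ref for ref, desc in rfq_items.items()}
    mapping = {}
    for desc in vendor_descriptions:
        best = difflib.get_close_matches(desc.lower(), list(ref_by_desc), n=1, cutoff=cutoff)
        mapping[desc] = ref_by_desc[best[0]] if best else None
    return mapping


def flag_deviations(mapping, rfq_items):
    """Potential exclusions: RFQ refs nothing mapped to. Potential additions: unmapped vendor lines."""
    matched = {ref for ref in mapping.values() if ref is not None}
    exclusions = sorted(set(rfq_items) - matched)
    additions = sorted(desc for desc, ref in mapping.items() if ref is None)
    return exclusions, additions


def flag_assumptions(text):
    """Return sentences or clauses containing qualifying language, for human review."""
    clauses = re.split(r"(?<=[.;])\s+", text)
    return [c.strip() for c in clauses if QUALIFIERS.search(c)]


rfq = {"A1": "Supply 6 inch carbon steel pipe", "A2": "Install pipe supports",
       "A3": "Hydrostatic testing"}
lines = ["Supply 6in carbon steel pipe", "Install pipe supports", "Freight to site"]
mapping = map_to_rfq(lines, rfq)
print(flag_deviations(mapping, rfq))  # (['A3'], ['Freight to site'])
print(flag_assumptions("Price based on current steel indices. Delivery in 12 weeks. "
                       "Excludes unloading; warranty subject to approved installation."))
```

Every flag is a prompt for a reviewer, not a decision: an unmatched vendor line may be a genuine addition or simply a description the matcher failed to recognize.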

The human role shifts from data entry to judgment: reviewers evaluate flagged deviations and assumptions, rather than finding them.

Approach 2: Structured RFQ Templates and Submission Standards

Key Takeaway: Standardized submission requirements reduce normalization time more reliably than any technology, because they prevent the variation that creates normalization work in the first place.

A structured submission standard includes:

- Pricing template: A provided spreadsheet or form in which vendors enter their pricing, organized by the project’s work breakdown structure or bill of materials. Vendors cannot add rows; they can only fill the defined fields.

- Scope exceptions form: A dedicated section where vendors must explicitly list any RFQ requirements they are not including, with a corresponding line item reference and rationale.

- Commercial assumptions form: A standardized table where vendors list commercial qualifications—payment terms, escalation clauses, warranty terms, currency assumptions—in defined fields rather than narrative prose.

- Clarification request protocol: A defined process and deadline for vendors to request clarification before submission, reducing scope ambiguity that would otherwise create deviations.
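A structured standard also makes conformance checkable before evaluation begins. The sketch below assumes the template is a set of RFQ line references, each with a required unit, and that the exceptions form arrives as (reference, rationale) rows; `validate_submission` is a hypothetical name. It flags rows the vendor added, unit mismatches, exceptions without a valid reference or rationale, and template rows that are neither priced nor excepted.

```python
def validate_submission(template, submission, exceptions):
    """Check a vendor submission against the issued pricing template.

    template:   {ref: required unit} for each row issued with the RFQ
    submission: list of (ref, unit, price) rows returned by the vendor
    exceptions: list of (ref, rationale) rows from the scope exceptions form
    Returns a list of problems; an empty list means the submission conforms.
    """
    problems = []
    priced = set()
    for ref, unit, _price in submission:
        if ref not in template:
            problems.append(f"{ref}: row not in template (vendors cannot add rows)")
            continue
        if unit != template[ref]:
            problems.append(f"{ref}: priced per {unit!r}, template requires {template[ref]!r}")
        priced.add(ref)
    excepted = set()
    for ref, rationale in exceptions:
        if ref not in template:
            problems.append(f"{ref}: exception does not reference a template line item")
            continue
        if not rationale.strip():
            problems.append(f"{ref}: exception has no rationale")
        excepted.add(ref)
    # Every template row must be either priced or explicitly listed as an exception.
    for ref in template:
        if ref not in priced and ref not in excepted:
            problems.append(f"{ref}: neither priced nor listed as an exception")
    return problems


template = {"1.1": "m", "1.2": "ea", "1.3": "lump sum"}
submission = [("1.1", "ft", 40.0), ("1.2", "ea", 950.0), ("1.4", "ea", 120.0)]
for problem in validate_submission(template, submission, exceptions=[("1.3", "")]):
    print(problem)
```

Returning the problems to the vendor before the bid enters evaluation moves this work to the party best placed to fix it.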

A mid-sized engineering firm that implemented standardized submission templates across its project portfolio reported a 30% reduction in normalization time. Vendors also responded positively: structured templates reduce the effort required to prepare a competitive bid, which can increase bid participation.

Approach 3: Pre-Bid Alignment with Vendors

Key Takeaway: Scope and format questions addressed before bid submission eliminate normalization work that would otherwise be required after submission.

Pre-bid meetings and clarification protocols create opportunities to resolve ambiguity before it becomes a normalization problem. A structured pre-bid process includes:

- Pre-bid meeting: A meeting with all invited vendors before submission deadline to review RFQ scope, submission requirements, and evaluation criteria. Questions and answers are documented and issued to all vendors as addenda.

- One-on-one clarification sessions: For complex capital projects, individual sessions with vendors to address specific scope questions that do not need to be shared with all bidders.

- RFQ addendum process: A formal mechanism for issuing scope clarifications or corrections to all vendors simultaneously, ensuring a consistent scope basis across submissions.

Infrastructure project teams that implemented structured pre-bid alignment have reported 35-45% reductions in post-submission clarification rounds and normalization time, because scope questions are resolved before they become submission discrepancies.

Approach 4: Real-Time Collaboration Platforms for Bid Review

Key Takeaway: Centralizing bid review on a shared platform eliminates the coordination overhead of distributed normalization, because reviewers work in parallel on the same document rather than in sequence on separate copies.

In capital projects with geographically distributed project teams, bid review is often serial: the commercial team reviews first, marks up their copy, and passes it to the technical team. Version control failures, conflicting markups, and coordination delays add cycle time that has nothing to do with the complexity of the bids.

A centralized bid review platform enables:

- Simultaneous access: Multiple reviewers work on the same document instance simultaneously, with each reviewer’s annotations visible to others in real time

- Role-based review: Commercial reviewers see pricing fields; technical reviewers see scope and specification fields; access controls prevent cross-contamination of evaluations before defined review stages

- Integrated clarification tracking: Clarification requests to vendors are issued from the platform and responses are logged against the relevant line items

- Audit trail: All review activity—annotations, changes, clarification requests, resolution decisions—is recorded automatically for defensibility
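The role-based view and the audit trail can be illustrated with a small in-memory model. This is a sketch under assumptions, not how any particular platform works: the role-to-field mapping (`VISIBLE_FIELDS`) and the `BidReview` class are hypothetical, notes are deliberately visible to every role so reviewers see each other's findings, and filtering fields here stands in for real access control.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-field mapping; notes are visible to every role so that
# reviewers see each other's findings.
VISIBLE_FIELDS = {
    "commercial": {"ref", "unit_price", "payment_terms", "notes"},
    "technical": {"ref", "scope_description", "exceptions", "notes"},
}


@dataclass
class BidReview:
    line_items: list                      # one dict per bid line, keyed by field name
    audit_log: list = field(default_factory=list)

    def view(self, reviewer, role):
        """Return only the fields this role may see, and record the access."""
        allowed = VISIBLE_FIELDS[role]
        self._record(reviewer, "view", role)
        return [{k: v for k, v in item.items() if k in allowed} for item in self.line_items]

    def annotate(self, reviewer, ref, note):
        """Attach a shared note to one line item and record it."""
        for item in self.line_items:
            if item["ref"] == ref:
                item.setdefault("notes", []).append((reviewer, note))
                self._record(reviewer, "annotate", f"{ref}: {note}")
                return
        raise KeyError(f"no line item {ref!r}")

    def _record(self, reviewer, action, detail):
        # Append-only log: (UTC timestamp, reviewer, action, detail)
        self.audit_log.append((datetime.now(timezone.utc).isoformat(), reviewer, action, detail))


review = BidReview([{"ref": "1.1", "unit_price": 12.0, "scope_description": "6 in pipe"}])
review.annotate("tech.reviewer", "1.1", "Confirm pipe schedule")
print(review.view("commercial.reviewer", "commercial"))
print(len(review.audit_log))  # 2
```

The commercial reviewer sees the technical reviewer's note without seeing the scope fields, and both actions land in the same log.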

A multinational manufacturing company that implemented centralized bid review reduced normalization cycle time by 25% while improving inter-reviewer consistency, because reviewers could see each other’s findings rather than discovering the same deviations independently.

Approach 5: Standardized Normalization Methodology

Key Takeaway: Inconsistent normalization methodology across projects and reviewers creates re-work and auditability problems. A documented standard methodology applied consistently eliminates both.

Without a documented normalization methodology, each project team makes its own decisions about how to handle scope deviations, what unit rates to use for pricing excluded items, and how to treat commercial assumptions. The result: normalization decisions that cannot be audited, compared across projects, or defended to stakeholders who question the award.

A standard normalization methodology documents:

| Decision | Standard Approach |
|---|---|
| How to price an item excluded by a vendor | Use the project estimate unit rate; document the assumption |
| How to price an item included by a vendor beyond RFQ scope | Identify as a potential add-on; flag for the evaluation team to decide whether to include or exclude it in the comparison |
| How to handle commercial assumptions that affect price basis | Restate the price on the standard commercial basis; document the adjustment |
| How to handle differences in units of measure | Convert all submissions to the same units; document conversion factors |
| How to document normalization decisions | Structured decision log with RFQ reference, deviation description, adjustment applied, and reviewer |

Standardization ensures that two reviewers applying the methodology to the same set of bids will reach the same normalized result—which is the minimum requirement for a defensible award decision.
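Two rows of the methodology table, unit conversion with documented factors and the structured decision log, can be sketched together. The example assumes length units only, with factors taken from the exact definition 1 ft = 0.3048 m; `Decision`, `restate_unit_price`, and `normalize_units` are hypothetical names. Because the conversion is a pure function of its inputs, two reviewers given the same bid produce the same restated price and the same log entry.

```python
from dataclasses import dataclass

# Length of one unit in metres (exact definitions). Unit names are illustrative.
METRES_PER_UNIT = {"m": 1.0, "ft": 0.3048}


@dataclass(frozen=True)
class Decision:
    rfq_ref: str       # RFQ line item the decision applies to
    vendor: str
    deviation: str     # what differed from the RFQ basis
    adjustment: str    # what was done, including the factor used
    reviewer: str


def restate_unit_price(price, from_unit, to_unit):
    """Convert a price per from_unit into a price per to_unit (length units only)."""
    # $/ft -> $/m: divide by metres per foot, then multiply by metres per target unit.
    return price / METRES_PER_UNIT[from_unit] * METRES_PER_UNIT[to_unit]


def normalize_units(log, vendor, rfq_ref, price, from_unit, to_unit, reviewer):
    """Restate a vendor unit price in the RFQ unit and append the decision to the log."""
    converted = round(restate_unit_price(price, from_unit, to_unit), 2)
    factor = METRES_PER_UNIT[from_unit] / METRES_PER_UNIT[to_unit]
    log.append(Decision(
        rfq_ref=rfq_ref,
        vendor=vendor,
        deviation=f"priced per {from_unit}; RFQ specifies per {to_unit}",
        adjustment=f"{price} per {from_unit} / {factor} = {converted} per {to_unit}",
        reviewer=reviewer,
    ))
    return converted


decision_log = []
price_per_m = normalize_units(decision_log, "Vendor B", "2.4", 10.0, "ft", "m", reviewer="Reviewer 1")
print(price_per_m)  # 32.81
print(decision_log[0].adjustment)
```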

Measuring Quote Normalization Performance

Track these metrics to identify where normalization time is being consumed and where improvements are delivering results:

| Metric | How to Measure | Benchmark |
|---|---|---|
| Total normalization cycle time | Days from bid receipt to completed bid tabulation | Varies by project complexity; track trend |
| Data extraction time per bid | Hours spent extracting line items from each vendor submission | Target: reduction of 50%+ with automation |
| Scope deviation rate | Number of deviations identified per vendor per RFQ | A high rate signals RFQ design problems |
| Post-submission clarification rounds | Number of clarification rounds required after bid submission | Target: 1 or fewer |
| Bid tabulation rework rate | % of bid tabulations requiring material revision after initial completion | Target: under 10% |
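If each RFQ is logged with a few fields, most of these metrics are straightforward to compute. The record layout in this sketch is a hypothetical schema chosen for illustration and the sample figures are invented; only the metric definitions come from the table. `normalization_metrics` is an illustrative name.

```python
from datetime import date


def normalization_metrics(records):
    """Compute tracking metrics from per-RFQ records.

    Each record (hypothetical schema) holds: received and tabulated dates,
    deviations per vendor, post-submission clarification rounds, and whether
    the tabulation needed material revision.
    """
    n = len(records)
    cycle_days = [(r["tabulated"] - r["received"]).days for r in records]
    deviation_counts = [c for r in records for c in r["deviations"].values()]
    return {
        "avg_cycle_days": sum(cycle_days) / n,
        "deviations_per_vendor_per_rfq": sum(deviation_counts) / len(deviation_counts),
        "avg_clarification_rounds": sum(r["clarification_rounds"] for r in records) / n,
        "rework_rate_pct": 100 * sum(r["reworked"] for r in records) / n,
    }


records = [
    {"received": date(2024, 3, 1), "tabulated": date(2024, 3, 15),
     "deviations": {"A": 4, "B": 6}, "clarification_rounds": 2, "reworked": True},
    {"received": date(2024, 4, 1), "tabulated": date(2024, 4, 9),
     "deviations": {"A": 1, "B": 2, "C": 3}, "clarification_rounds": 1, "reworked": False},
]
metrics = normalization_metrics(records)
print(metrics)
# Compare against the targets: 1 or fewer clarification rounds, rework under 10%.
print(metrics["avg_clarification_rounds"] <= 1, metrics["rework_rate_pct"] < 10)
```

Tracked over successive RFQs, a falling deviation rate is the clearest signal that RFQ design changes are working.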

Frequently Asked Questions: Quote Normalization in Capital Projects

Q: What is the difference between quote normalization and bid leveling?

The terms are used interchangeably in most contexts. “Bid leveling” is more common in engineering and construction; “quote normalization” is more common in manufacturing and industrial procurement. Both refer to the same process: adjusting vendor submissions to a common scope and commercial basis to enable direct comparison.

Q: Should procurement normalize bids before or after sharing with technical reviewers?

Commercial normalization (pricing adjustments for scope deviations) and technical normalization (scope clarification) should be coordinated but can occur in parallel. Technical reviewers identify what was excluded or included; commercial reviewers price the adjustments. Separating technical and commercial evaluation until normalization is complete prevents price anchoring from influencing technical assessment.

Q: How do you handle a bid where the vendor’s scope deviation is so large that the normalized comparison is not meaningful?

Issue a clarification request to the vendor asking them to resubmit on the correct scope basis. If the deviation is material enough that normalizing it requires assumptions with large cost uncertainty, the normalized comparison will be unreliable. A resubmission on the correct scope is more defensible than a heavily adjusted comparison.
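One way to make "material enough" operational is to carry each normalizing adjustment as a low and high estimate and check how wide the resulting range of normalized prices is. The 10% limit in this sketch is a hypothetical policy value, not a recommendation, and `normalization_decision` is an illustrative name.

```python
def normalization_decision(submitted_total, adjustment_ranges, max_spread_share=0.10):
    """Decide whether to normalize a bid or request a resubmission.

    adjustment_ranges: (low, high) cost estimates for each adjustment needed to
    bring the bid to RFQ scope. max_spread_share is a hypothetical policy limit
    on how uncertain the normalized price may be, as a share of the submitted price.
    """
    low = submitted_total + sum(lo for lo, _ in adjustment_ranges)
    high = submitted_total + sum(hi for _, hi in adjustment_ranges)
    spread_share = (high - low) / submitted_total
    decision = "request resubmission" if spread_share > max_spread_share else "normalize"
    return low, high, decision


print(normalization_decision(500_000.0, [(40_000.0, 120_000.0)]))
# (540000.0, 620000.0, 'request resubmission')
print(normalization_decision(500_000.0, [(5_000.0, 8_000.0)]))
# (505000.0, 508000.0, 'normalize')
```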

Q: What automation tools exist specifically for capital project bid normalization?

Dedicated procurement platforms for engineering and construction (including tools like Purchaser) provide structured RFQ workflows, automated data extraction, scope deviation flagging, and bid tabulation generation. These tools are designed for the multi-vendor, multi-line-item complexity of capital project procurement and reduce normalization time substantially compared to spreadsheet-based approaches.

Q: How do you ensure normalization decisions are defensible in a post-award dispute?

Document every normalization decision: the deviation identified, the adjustment applied, the basis for the adjustment (unit rate source, assumption rationale), and the reviewer who made the decision. A complete normalization decision log, linked to the specific line items in the bid tabulation, provides the audit trail required to defend the award in a dispute or regulatory review.

Summary: Reducing Quote Normalization Time in Capital Projects

| Approach | Time Reduction Potential | Implementation Complexity | Priority |
|---|---|---|---|
| Improve RFQ design (templates, scope clarity) | 25-40% | Low (process change, no new technology required) | Highest; start here |
| Implement automated data extraction | 40-60% on extraction step | Medium (platform required) | High |
| Pre-bid alignment and clarification protocol | 30-45% on post-submission clarifications | Low (process change) | High |
| Centralized bid review platform | 20-30% on review coordination | Medium (platform required) | Medium |
| Standardized normalization methodology | Reduces rework and re-review | Low (documentation effort) | Medium |

The highest-leverage intervention is improving RFQ design: it needs no new technology, takes effect on the next RFQ issued, and prevents the variation that makes every downstream step harder. Automation amplifies the gains from better RFQ design but cannot compensate for RFQs that invite unstructured responses. Organizations that address both will see the largest reductions in normalization time and the most defensible award decisions.